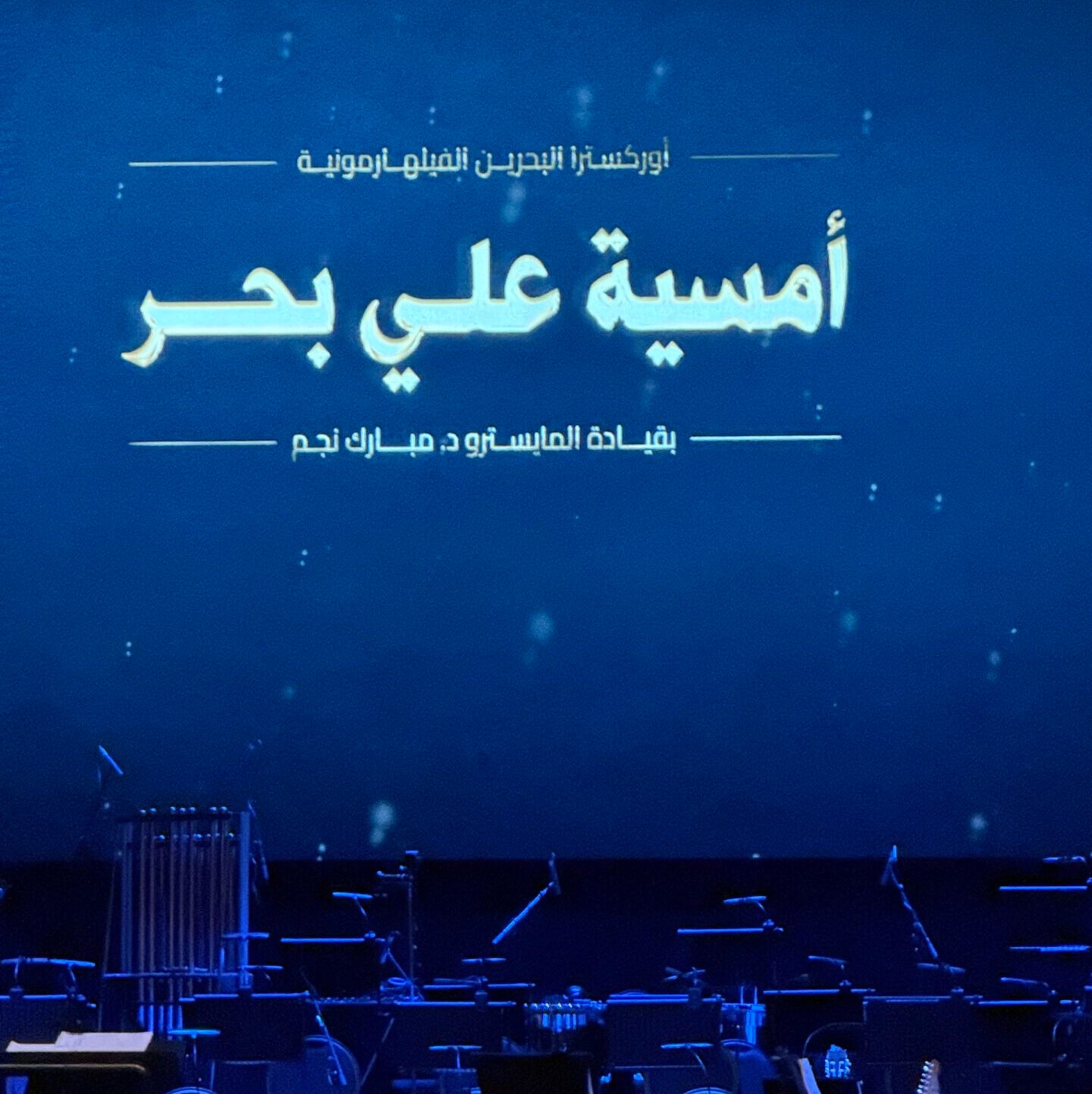

Yumma Warda Orchestra

YUMMA WARDA – ALI BAHAR ORCHESTRA

AI Generated Video • Storytelling • Event

Yumma Warda AI Generated Video

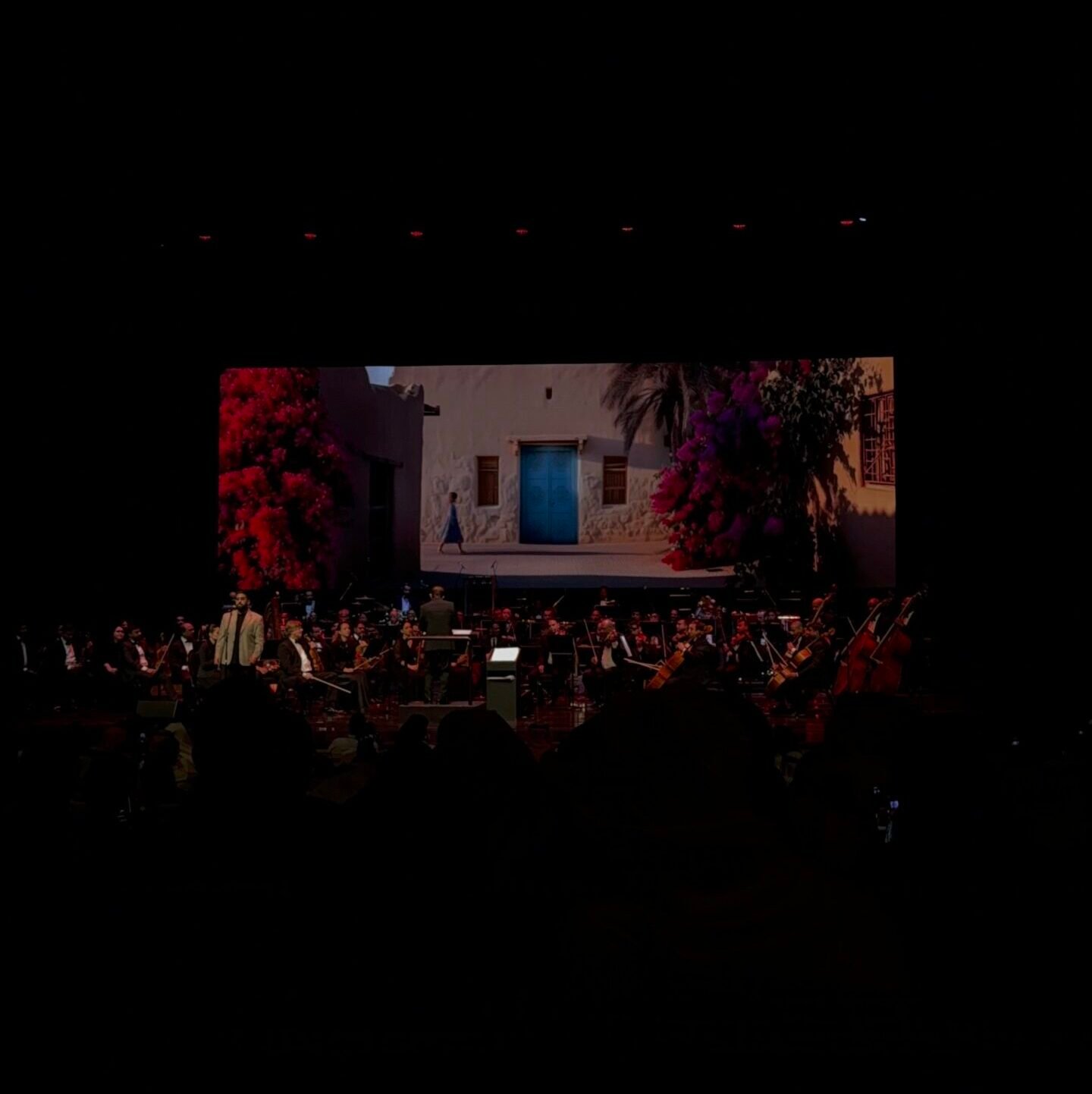

This project is an AI-generated music video created for the "Yumma Warda" performance as part of an orchestra event. The video combines AI-generated visuals with creative direction and editing to produce a visually rich, emotionally engaging experience that complements the music. It was designed to play alongside the live performance, with visuals synchronized to the music to enhance the overall atmosphere.

Category

AI Generated Video

Client

Dr. Mubarak Najem

Date

October 2025

By

Sara Alsubait - Jood Al Mahmood

THE GOAL & OBJECTIVE

The main goal was to create a unique visual experience that would enhance the orchestral performance by translating the music into expressive, meaningful visuals.

The objective was to explore the use of AI in creative production while ensuring that the visuals matched the rhythm, mood, and cultural tone of the song.

THE STORY

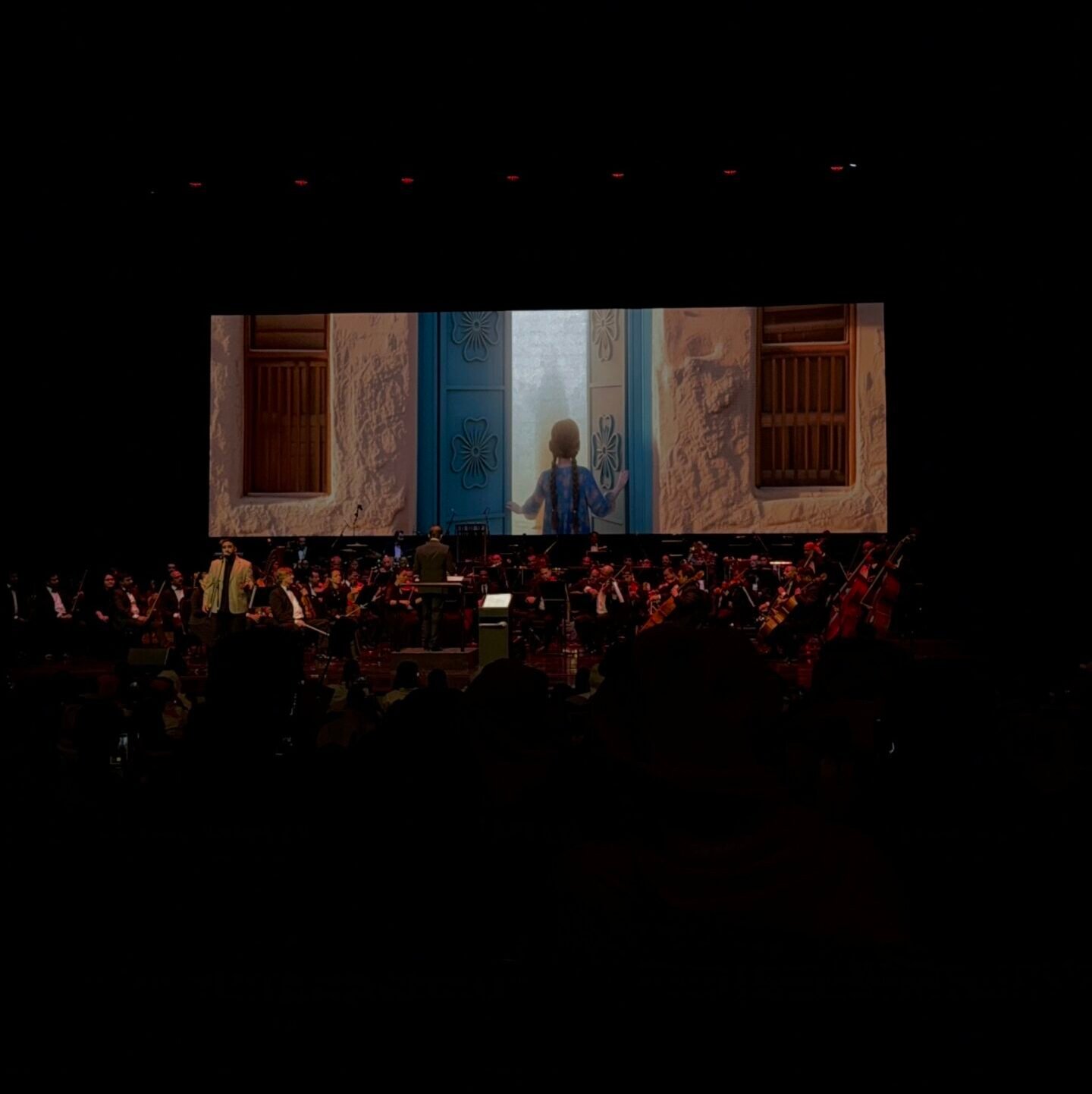

The video is a visual interpretation of the song "Yumma Warda", using imagery to reflect emotion, atmosphere, and meaning rather than to tell a direct narrative. Instead of following a linear story, the visuals translate the music into expressive scenes that evolve with its rhythm and tone. Each moment is designed to capture a feeling, allowing the audience to experience the music through what they see.

As the song progresses, the visuals shift between calm, reflective scenes and more dynamic, expressive sequences. This variation creates a natural flow that mirrors the structure of the music, with transitions, pacing, and composition working together to build an immersive experience.

The storytelling relies on visual symbolism and cinematic editing, inviting the audience to interpret the video in their own way. Rather than guiding viewers through a fixed storyline, the video creates a sensory journey that connects sound with imagery and complements the live performance.

OUR APPROACH

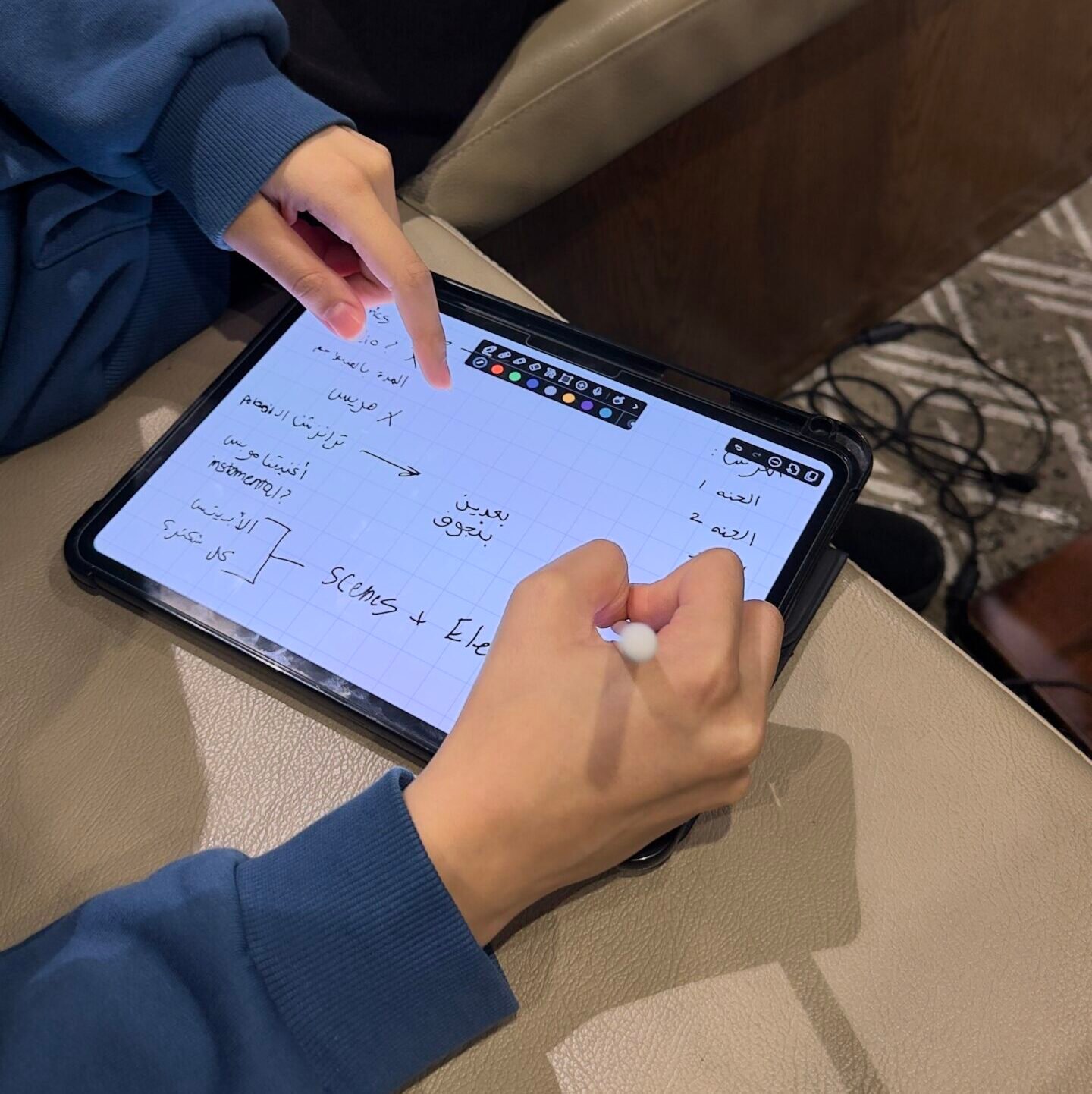

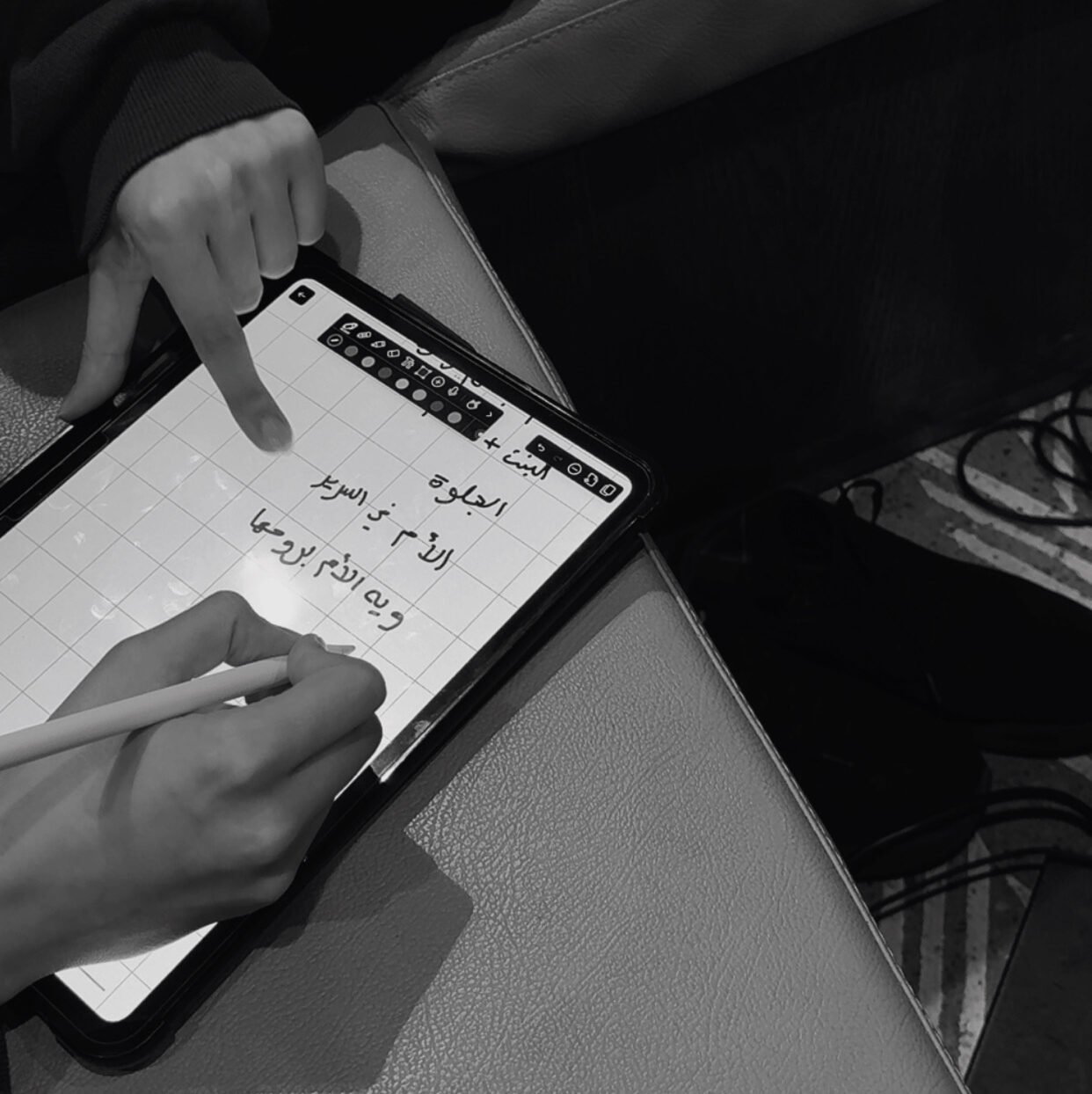

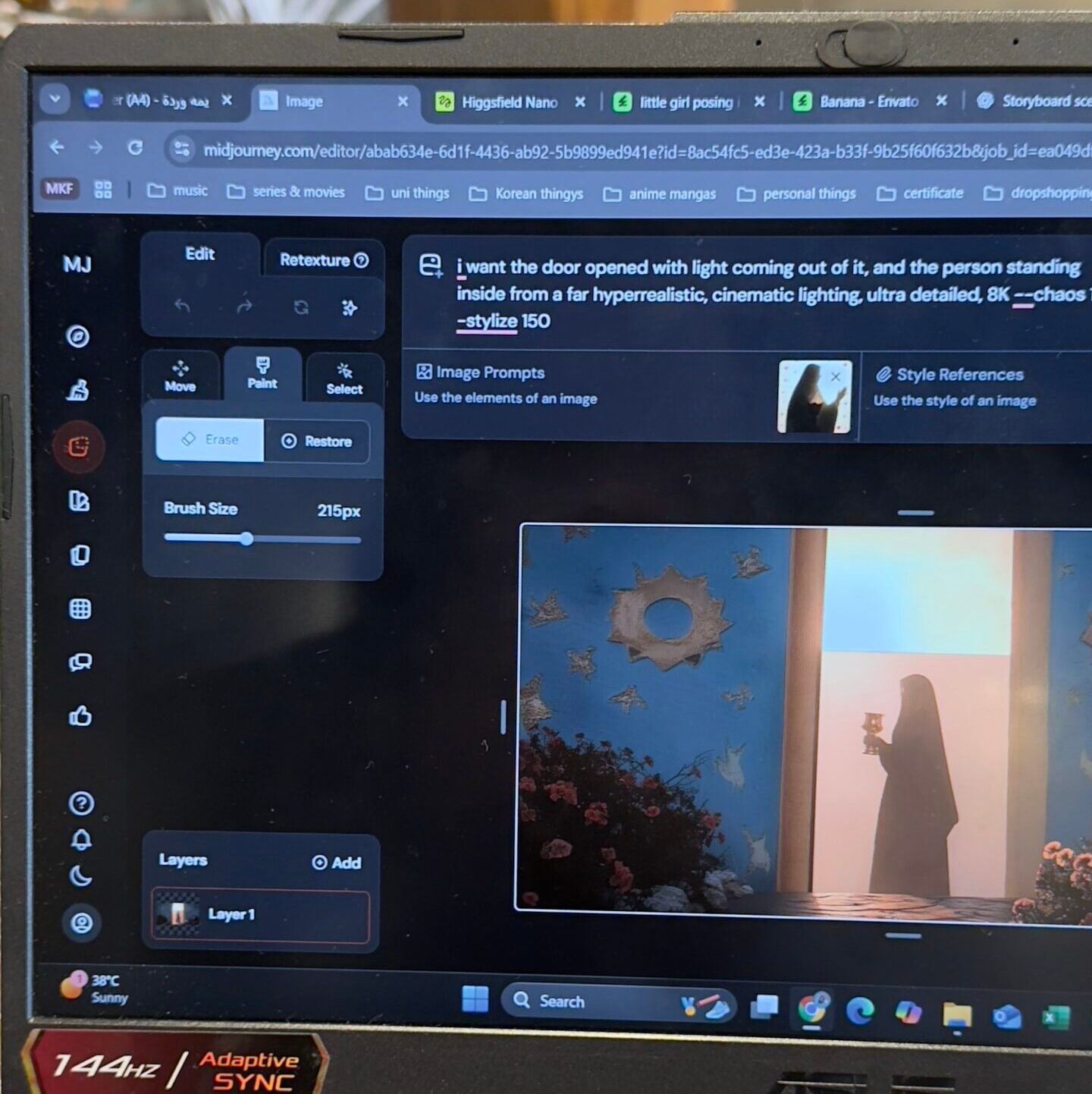

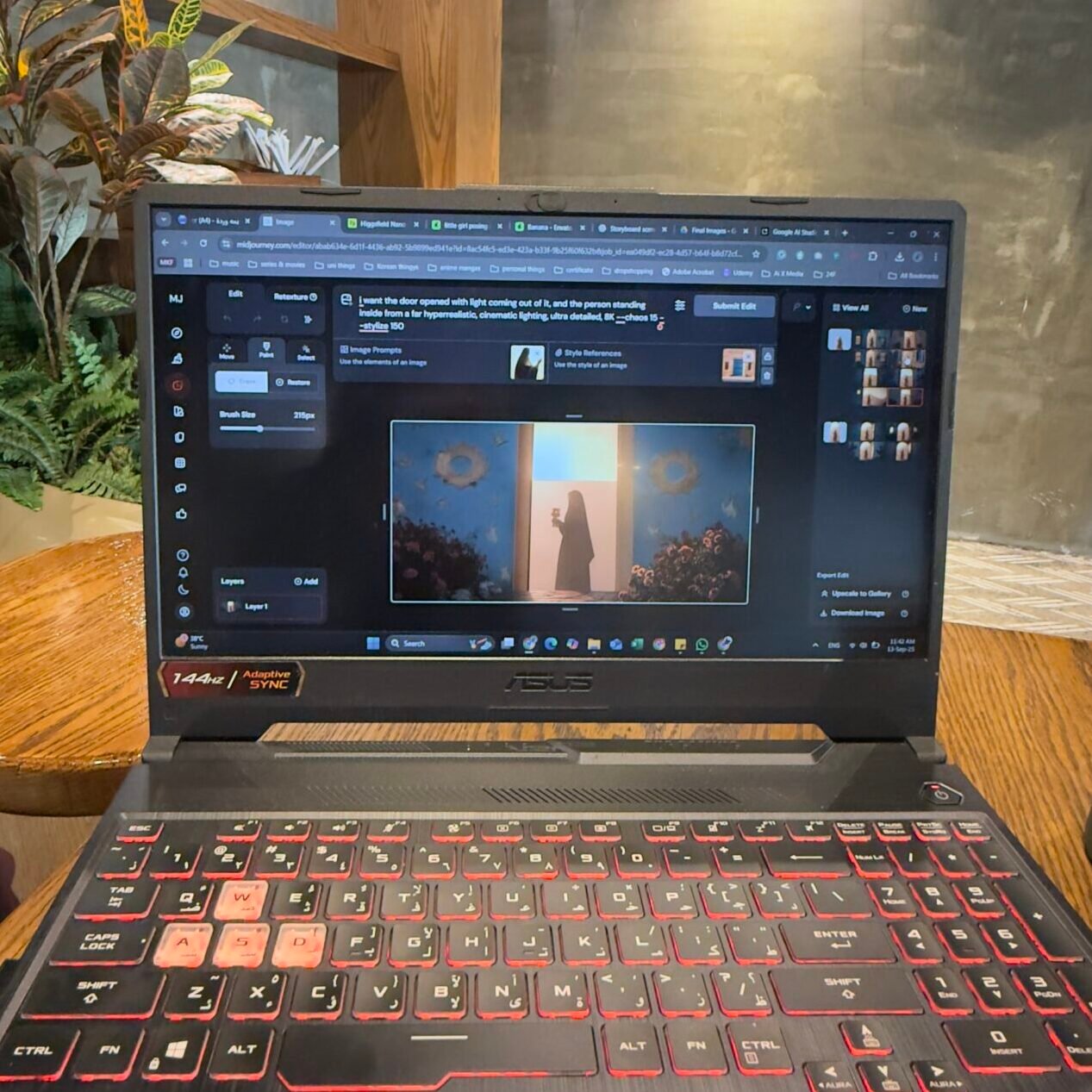

The project followed a structured creative workflow, starting with ideation and research to understand the mood, theme, and direction of the music. This was followed by brainstorming and storyboarding to plan the visual flow and ensure a clear connection between the music and imagery.

The production stage began with generating still images in Midjourney. These images were then animated into video sequences using tools such as Higgsfield and Kling. Additional visual elements and effects were sourced from Envato Elements to raise the overall quality and strengthen the cinematic feel.

In the final stage, all assets were assembled and refined in Adobe Premiere Pro, where editing focused on synchronizing the visuals with the music, creating smooth transitions, and maintaining visual consistency. This structured approach allowed for both creative exploration and controlled execution, resulting in a cohesive and immersive final video.

THE OUTCOME

The final video enhanced the orchestra performance by adding a layer of visual storytelling, creating a more immersive experience for the audience. The project also expanded my experience in AI-driven media production and in working on real-world creative events.